Achieving 99.5% Data Accuracy: The AnomIQ Redundancy Architecture

When building a quantitative monitoring terminal, your mathematical models are only as reliable as your data pipeline.

If you are calculating sub-minute rolling windows and standard deviations (Z-Scores) to detect institutional order flow, missing even a 3-second burst of data packets completely corrupts the resulting analysis.
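To make the sensitivity concrete, here is a minimal sketch of a Z-score computed over 3-second volume buckets. The bucket values are invented for illustration, and `z_score` is a hypothetical helper, not AnomIQ's production code.

```python
from statistics import mean, pstdev

def z_score(window, latest):
    """Z-score of `latest` relative to the values in `window`."""
    mu = mean(window)
    sigma = pstdev(window)
    if sigma == 0:
        return 0.0  # flat window: no meaningful deviation
    return (latest - mu) / sigma

# Hypothetical traded volume per 3-second bucket (baseline window).
baseline = [30, 33, 29, 31, 30, 32, 28, 31]

# The same burst, once fully captured and once lost to a connection gap.
burst_captured = 75
burst_lost = 0

z_captured = z_score(baseline, burst_captured)
z_lost = z_score(baseline, burst_lost)

print(f"captured: {z_captured:.2f}, lost: {z_lost:.2f}")
```

With the burst captured, the bucket stands far above the baseline. With the burst lost, the same bucket reads as an unusually quiet period, so the signal points in the wrong direction.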

The reality of the digital asset data industry is that many entry-level screeners rely on a single server connection. However, in distributed systems, the network is inherently unreliable: latency varies and connections drop.

AnomIQ uses a distributed architecture to achieve 99.5% tick-level coverage.

The Problem: Data Latency and Connection Fragility

Major exchanges stream real-time execution data via WebSockets. While this is the fastest delivery method, it is fragile for three reasons:

- TCP Packet Loss: Data travels across global fiber-optic routes. During high-traffic periods, packets can be dropped in transit. TCP retransmits them, but heavy loss stalls the stream and can reset the connection outright.

- The “Zombie” Connection: During periods of massive market volatility, broadcast engines may prioritize execution over data streaming. A connection may remain “open” while no data is actually flowing.

- The Reconnect Gap: When a connection drops, the time required for a new TCP handshake creates a “blind spot” where critical data is permanently lost.

If a large-scale institutional move occurs during a connection gap, standard data tools fail to register the event.

The AnomIQ Solution: 6x Redundancy & Active Watchdogs

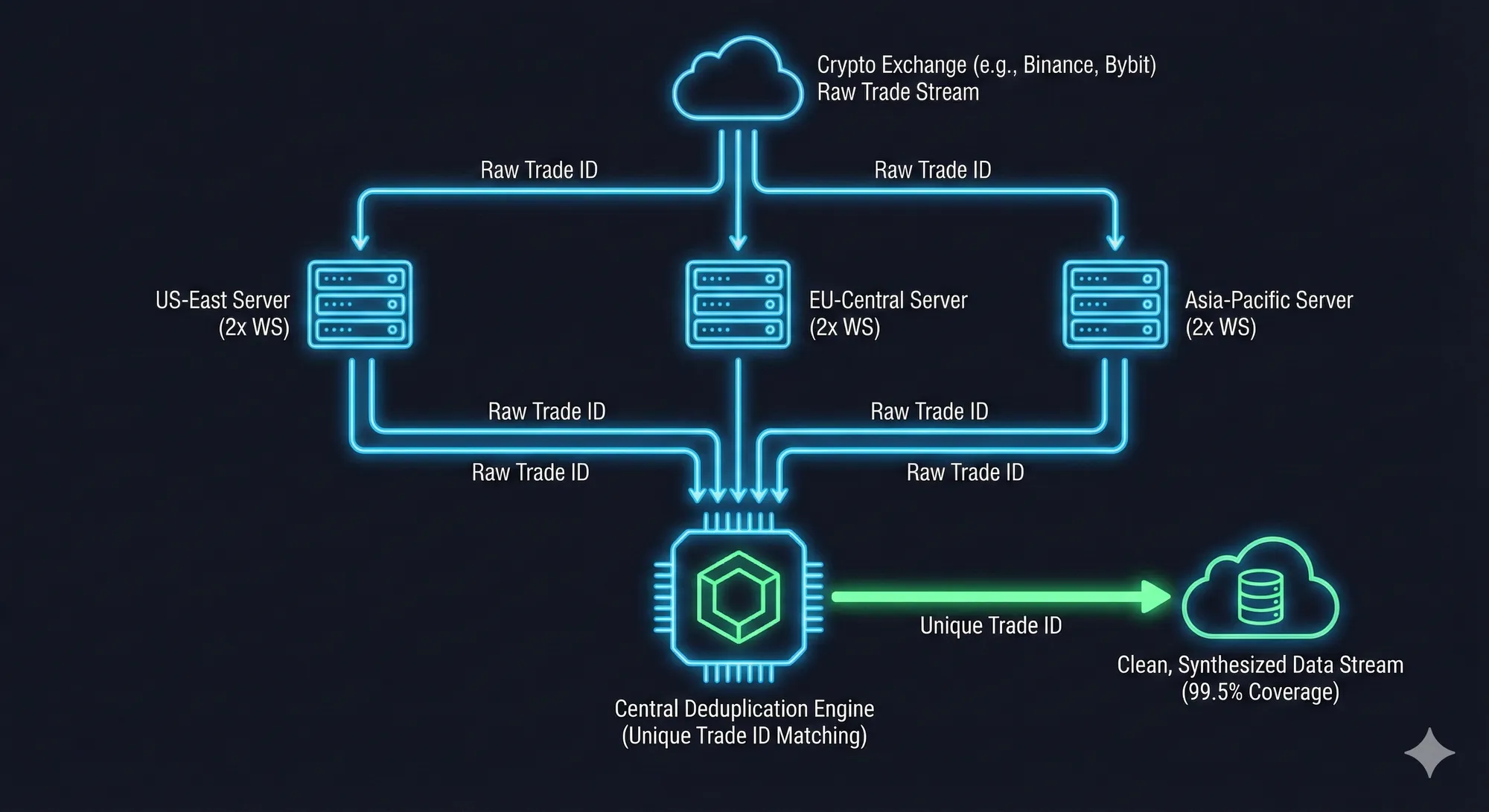

To solve the “reconnect gap” and packet loss, AnomIQ utilizes a geographically distributed architecture rather than a single data pipe.

- Geographic Distribution: We operate three independent ingestion nodes located in different global data center regions.

- Stream Isolation (Batching): Instead of one massive stream, AnomIQ isolates assets into discrete batches. If one connection encounters an error, the rest of the market monitoring remains unaffected.

- Symbol-Level Dual-Piping: Every server node maintains two concurrent WebSocket connections per batch.

- The 5-Second Watchdog: We monitor the data flow itself. If any connection sits idle for more than 5 seconds during active market hours, our system automatically terminates and restarts that specific socket so that data flow resumes as quickly as possible.
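The watchdog idea can be sketched as a small idle tracker. This is an illustrative sketch only: `SocketWatchdog`, its method names, and the connection IDs are hypothetical, and the actual socket restart is left to the caller.

```python
import time

IDLE_LIMIT_SECONDS = 5.0  # idle threshold stated in the text

class SocketWatchdog:
    """Tracks the last message time per connection and reports idle ones."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock      # injectable so the logic can be tested
        self._last_seen = {}     # connection id -> time of last message

    def register(self, conn_id):
        self._last_seen[conn_id] = self._clock()

    def on_message(self, conn_id):
        # Call this from the socket's message handler.
        self._last_seen[conn_id] = self._clock()

    def stale_connections(self):
        # Connections that have been silent for longer than the limit.
        now = self._clock()
        return [cid for cid, seen in self._last_seen.items()
                if now - seen > IDLE_LIMIT_SECONDS]

# Usage with a fake clock so the example is deterministic.
fake_now = [0.0]
watchdog = SocketWatchdog(clock=lambda: fake_now[0])
watchdog.register("batch-01/pipe-0")
watchdog.register("batch-01/pipe-1")

fake_now[0] = 4.0
watchdog.on_message("batch-01/pipe-0")   # pipe-0 is still delivering data

fake_now[0] = 6.0
to_restart = watchdog.stale_connections()
print(to_restart)
```

Because the check is based on message arrival rather than socket state, it also catches a "zombie" connection that is still open but no longer delivering data.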

The result: With 3 servers and 2 connections per batch, AnomIQ ingests every data point six different times across varied network routes.
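The 6x figure follows from multiplying nodes by connections per batch. A minimal sketch, assuming placeholder node names (the real regions are not named in this article):

```python
from itertools import product

NODES = ["node-a", "node-b", "node-c"]  # hypothetical names for the three ingestion nodes
PIPES_PER_BATCH = 2                     # concurrent WebSocket connections per batch, per node

def feeds_for_batch(batch_id):
    """Every (node, batch, pipe) combination that carries this batch."""
    return [(node, batch_id, pipe)
            for node, pipe in product(NODES, range(PIPES_PER_BATCH))]

feeds = feeds_for_batch("batch-01")
print(len(feeds))  # 3 nodes x 2 pipes
```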

Server-Side Deduplication: Synthesizing the Truth

While 6x redundancy solves the “missing data” problem, it creates duplicates. Our processing engine must synthesize these streams into a single “Source of Truth.”

As data floods in, our engine performs sub-millisecond deduplication based on three exact parameters:

- Exchange Identity

- Asset Pair

- Unique Transaction ID (the immutable ID assigned by the exchange matching engine)

The engine discards duplicates instantly, transforming redundant network traffic into a single stream in which each trade appears exactly once.
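A deduplication pass keyed on those three parameters might look like the sketch below. The `Trade` type and its field names are assumptions made for illustration, and the unbounded `seen` set would need an eviction policy in a long-running service.

```python
from typing import Iterable, Iterator, NamedTuple

class Trade(NamedTuple):
    exchange: str   # exchange identity
    pair: str       # asset pair
    trade_id: str   # ID assigned by the exchange matching engine
    price: float
    qty: float

def deduplicate(trades: Iterable[Trade]) -> Iterator[Trade]:
    """Yield each trade once, keyed on (exchange, pair, trade_id)."""
    seen = set()
    for trade in trades:
        key = (trade.exchange, trade.pair, trade.trade_id)
        if key in seen:
            continue  # already received via another connection
        seen.add(key)
        yield trade

# The same trade arriving over several redundant connections.
incoming = [
    Trade("binance", "BTCUSDT", "1001", 64000.0, 0.5),
    Trade("binance", "BTCUSDT", "1001", 64000.0, 0.5),
    Trade("coinbase", "BTC-USD", "1001", 64010.0, 0.1),
    Trade("binance", "BTCUSDT", "1002", 64001.0, 0.2),
    Trade("binance", "BTCUSDT", "1001", 64000.0, 0.5),
]
unique = list(deduplicate(incoming))
print(len(unique))
```

Including the exchange and pair in the key matters because a transaction ID is only unique within the venue and market that issued it.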

Verification: The 99.5% Accuracy Benchmark

We audit our performance by comparing our real-time captures against official exchange ledger archives (CSV dumps).

By running differential scripts against these source-of-truth files, we verify our capture rate. We claim 99.5% rather than 100% because exchange maintenance windows around the globe make a perfect capture rate unrealistic. By benchmarking against official ledgers, we confirm that our architecture captures the trades our users' metrics depend on.
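A differential check of this kind can be sketched as a set comparison of transaction IDs. The CSV layout, the `trade_id` column name, and the sample rows below are assumptions for illustration; real exchange archives differ in format.

```python
import csv
import io

def load_trade_ids(csv_text, id_column="trade_id"):
    """Read the set of trade IDs from ledger CSV text."""
    reader = csv.DictReader(io.StringIO(csv_text))
    return {row[id_column] for row in reader}

def capture_rate(captured_ids, ledger_ids):
    """Fraction of ledger trades that also appear in the captured set."""
    if not ledger_ids:
        raise ValueError("ledger is empty")
    return len(captured_ids & ledger_ids) / len(ledger_ids)

# Hypothetical ledger archive and the IDs captured in real time.
ledger_csv = (
    "trade_id,price,qty\n"
    "1,100.0,0.5\n"
    "2,100.1,0.2\n"
    "3,100.2,1.0\n"
    "4,100.1,0.3\n"
)
captured = {"1", "2", "4"}

ledger = load_trade_ids(ledger_csv)
rate = capture_rate(captured, ledger)
missing = sorted(ledger - captured)
print(f"capture rate: {rate:.1%}, missing: {missing}")
```

Listing the missing IDs alongside the rate makes it possible to check whether the gaps cluster around a maintenance window or a connection restart.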

For the anomaly layer that runs on top of this pipeline, see How to Detect Crypto Volume Anomalies in Real Time (Binance USDT + Coinbase USD).