Z-Score in Trading: Detecting Statistical Anomalies in Market Activity

Financial markets produce an enormous stream of data every second. Trades execute, volumes fluctuate, and prices move continuously. For quantitative analysts and algorithmic traders, the real challenge is not accessing data but identifying when something statistically unusual is happening.

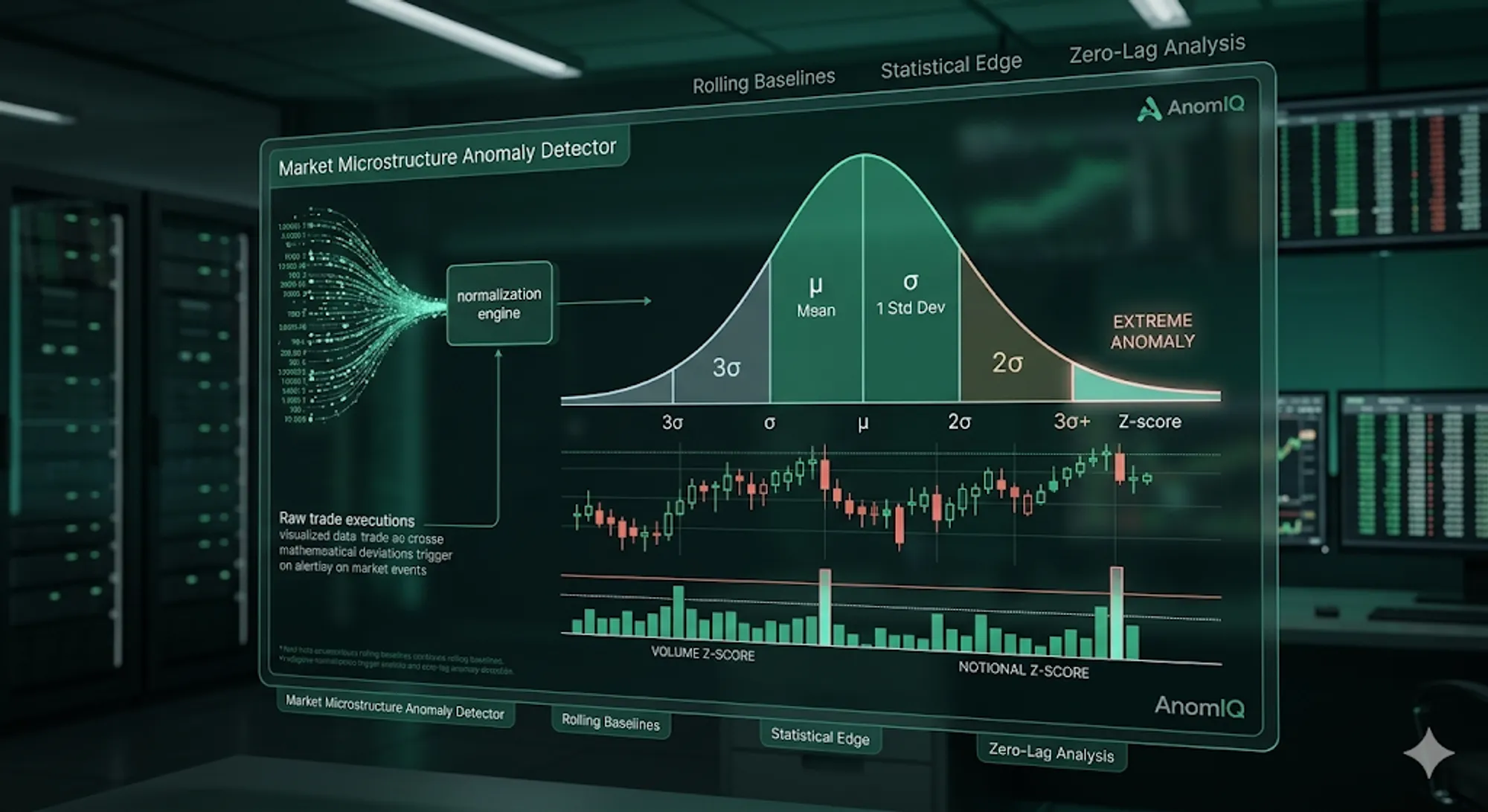

This is where the Z-score becomes a powerful tool for market microstructure analysis.

The Z-score lets us answer a simple but critical question:

Is the current market activity normal, or is it statistically unusual compared to its recent historical baseline?

Instead of relying on subjective chart inspection or lagging indicators like RSI, the Z-score provides an objective, quantitative way to measure anomalies in order flow.

What Is a Z-Score in Trading?

In statistics, a Z-score (or standard score) measures how far a specific data point deviates from the historical average, expressed in standard deviations.

It is calculated as:

$$Z = \frac{x - \mu}{\sigma}$$

Where:

- $x$ (Current Value): the latest real-time measurement (e.g., 1-minute volume, trade size)

- $\mu$ (Mean): the average of that metric over the historical lookback window

- $\sigma$ (Standard Deviation): how much the metric typically varies around its mean
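As a minimal sketch of the formula in Python, assuming the baseline is simply a list of past values and using the sample standard deviation (the article does not specify sample versus population), the calculation looks like this. The function name `z_score` and the volumes are hypothetical:

```python
from statistics import mean, stdev


def z_score(current: float, history: list[float]) -> float:
    """Return how many standard deviations `current` lies from the mean of `history`."""
    mu = mean(history)
    sigma = stdev(history)  # sample standard deviation (n - 1 denominator)
    if sigma == 0:
        raise ValueError("baseline has zero variance, so the Z-score is undefined")
    return (current - mu) / sigma


# Hypothetical 1-minute volumes forming the lookback baseline
history = [100.0, 120.0, 80.0, 110.0, 90.0]
print(round(z_score(160.0, history), 2))  # 3.79
```

Here the mean is 100 and the sample standard deviation is about 15.8, so a 160-unit minute sits roughly 3.8 standard deviations above its baseline.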

For trading applications, positive Z-scores are commonly read as follows (tail percentages assume normally distributed data):

| Z-Score | Statistical Meaning | Market Interpretation |
|---|---|---|
| 0 to 1.0 | Average | Normal background noise. |
| 1.0 to 2.0 | Elevated | Slightly above normal activity; worth monitoring. |
| 2.0 to 3.0 | Highly Unusual | In the top ~2.3% of observations. A possible structural shift. |
| > 3.0 | Extreme Anomaly | In the top ~0.13% of observations. Often a major liquidity event or large institutional execution. |
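The bands in the table can be expressed as a small lookup. This is a sketch: the table's endpoints overlap (1.0 appears in two rows), so the half-open boundaries below are one reasonable reading, and the "Below average" label for negative scores is a hypothetical addition because the table only covers positive deviations.

```python
def classify_z(z: float) -> str:
    """Map a Z-score to the interpretation bands in the table.

    Boundaries are half-open (e.g. 1.0 <= z < 2.0 is "Elevated"), one reading of
    the table's overlapping endpoints. Negative scores get a hypothetical
    "Below average" label because the table only covers positive deviations.
    """
    if z < 0.0:
        return "Below average"
    if z < 1.0:
        return "Average"
    if z < 2.0:
        return "Elevated"
    if z < 3.0:
        return "Highly Unusual"
    return "Extreme Anomaly"


for example in (0.4, 1.5, 2.7, 3.2):
    print(example, classify_z(example))
```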

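A Z-score of 3.0 or higher marks the event as a statistical outlier under the common three-sigma rule. In market analysis, this often indicates a strong shift in trading participation, aggressive taker flow, or sudden liquidity absorption. The tail percentages above only hold for normally distributed data, however: volume and trade-size distributions are typically right-skewed with fat tails, so readings above 3.0 occur more often in practice than a normal model predicts.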
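The percentages attached to these thresholds come from the standard normal distribution, and it matters whether they are read as one-tailed (only unusually high activity) or two-tailed (unusually high or low). Python's standard-library `statistics.NormalDist` makes the distinction easy to check:

```python
from statistics import NormalDist

std_normal = NormalDist(mu=0.0, sigma=1.0)

for z in (1.0, 2.0, 3.0):
    one_tailed = 1.0 - std_normal.cdf(z)  # P(Z > z): unusually high activity only
    two_tailed = 2.0 * one_tailed          # P(|Z| > z): unusually high or low
    print(f"Z > {z}: one-tailed {one_tailed:.2%}, two-tailed {two_tailed:.2%}")
```

For z = 2.0 this gives about 2.3% one-tailed versus about 4.6% two-tailed, and for z = 3.0 about 0.13% versus 0.27%. Volume anomalies are usually one-sided (a spike rather than a lull), so the one-tailed figures are the relevant ones, and even these hold only if the metric is roughly normal.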

Why Z-Score Beats Raw Volume Metrics

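Raw metrics like volume or trade count are mathematically blind: on their own, they say nothing about whether a given value is large for the instrument being traded.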

For example, $2 million in 5-minute volume might be a massive breakout indicator for a mid-cap altcoin, but it is completely insignificant background noise for Bitcoin.

Z-score solves this problem by normalizing activity relative to the instrument’s own historical behavior.

Instead of asking, “Is this a lot of volume?” you are asking, “Is this volume statistically abnormal for this specific asset at this specific time of day?” This dynamic normalization is what makes it possible to run a market scanner across hundreds of instruments simultaneously without getting drowned in false positives.
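To make the normalization concrete, the sketch below scores the same 2 million USD 5-minute print against two different baselines. The tickers and baseline numbers are made-up illustrations, not real market data:

```python
from statistics import mean, stdev


def z_score(current: float, history: list[float]) -> float:
    """Standard score of `current` relative to `history` (sample standard deviation)."""
    return (current - mean(history)) / stdev(history)


# Hypothetical recent 5-minute dollar volumes, in millions of USD (illustrative only)
baselines = {
    "BTC-USD": [180.0, 210.0, 195.0, 220.0, 205.0],
    "ALT-USD": [0.30, 0.45, 0.40, 0.35, 0.50],  # hypothetical mid-cap altcoin
}

current_volume = 2.0  # the same 2 million USD print for both instruments
for symbol, history in baselines.items():
    print(f"{symbol}: Z = {z_score(current_volume, history):+.1f}")
```

With these numbers the altcoin scores roughly +20, an extreme anomaly, while Bitcoin scores roughly -13: the same print is far below its usual activity, so it never registers as a spike. The raw number is identical; only the instrument-relative baseline changes the verdict.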

Core Z-Score Metrics for Order Flow

A high-fidelity market scanner applies Z-score normalization across multiple dimensions of the tape. Each reveals a different facet of market mechanics.

1. Volume Z-Score

Measures whether traded volume is unusually high relative to historical baselines.

- Z-Score > 3.0: Indicates a rare surge in market participation. These deviations often coincide with rapid repricing, news events, or sudden algorithmic execution.
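A minimal sketch of a bar-by-bar volume Z-score scan follows. It assumes a plain list of per-bar volumes; the 20-bar lookback is an arbitrary illustrative choice, and the 3.0 threshold mirrors the rule above. Each bar is scored against the bars before it, so a spike cannot inflate its own baseline:

```python
from statistics import mean, stdev
from typing import Iterator


def volume_anomalies(
    volumes: list[float], lookback: int = 20, threshold: float = 3.0
) -> Iterator[tuple[int, float]]:
    """Yield (bar index, Z-score) for bars whose volume Z-score exceeds `threshold`."""
    for i in range(lookback, len(volumes)):
        window = volumes[i - lookback:i]  # trailing baseline, excludes bar i
        sigma = stdev(window)
        if sigma == 0:
            continue  # flat baseline: Z-score undefined, skip this bar
        z = (volumes[i] - mean(window)) / sigma
        if z > threshold:
            yield i, z


# Hypothetical data: 20 quiet bars followed by one surge
bars = [100.0, 105.0, 95.0, 110.0, 90.0] * 4 + [400.0]
for index, z in volume_anomalies(bars):
    print(f"bar {index}: Z = {z:.1f}")
```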

See it in action: You can visualize these exact statistical deviations minute-by-minute using the Volume Anomaly Histograms in our new Deep Dive 2.0 dashboard.

2. Notional Volume Z-Score

Measures the total dollar value (price × quantity) of executed trades, i.e. capital flow. Because each trade is weighted by its size, a burst of small retail orders moves this metric far less than a handful of large fills.

- If the Notional Z-score is high (e.g., 3.2) but the Trade Count Z-score is low (e.g., 0.9), large amounts of capital are moving through very few transactions, a footprint consistent with institutional execution and block-style order flow.
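The notional-versus-count divergence can be checked directly. In this sketch the function name, the example figures, and the cutoffs (notional Z of at least 3.0, trade-count Z below 1.0, loosely modeled on the 3.2 vs 0.9 example) are all hypothetical:

```python
from statistics import mean, stdev


def z_score(current: float, history: list[float]) -> float:
    return (current - mean(history)) / stdev(history)


def block_flow_signal(
    notional: float, notional_hist: list[float],
    trades: float, trades_hist: list[float],
    notional_min: float = 3.0, count_max: float = 1.0,
) -> tuple[bool, float, float]:
    """Flag bars where dollar flow is anomalous but the number of trades is not."""
    notional_z = z_score(notional, notional_hist)
    count_z = z_score(trades, trades_hist)
    return notional_z >= notional_min and count_z < count_max, notional_z, count_z


# Hypothetical bar: notional doubles while the trade count barely moves
flag, nz, cz = block_flow_signal(
    notional=2.0, notional_hist=[1.0, 1.2, 0.8, 1.1, 0.9],  # millions of USD
    trades=505, trades_hist=[500, 520, 480, 510, 490],
)
print(flag, round(nz, 1), round(cz, 1))
```

In this example the average trade size (notional divided by trade count) roughly doubles, which is exactly the "large capital, few transactions" footprint described above.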

3. Directional Z-Score (Buy vs. Sell Volume)

Directional Z-scores separate aggressive taker-buys from taker-sells, providing insight into which side of the market is dominating the tape.

- If the Buy Volume Z-score is 3.5 and the Sell Volume Z-score is 0.4, the anomaly is heavily skewed toward buyers. Tracking this directional asymmetry helps analysts identify aggressive accumulation or distribution phases as they develop in the tape.
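Directional scoring applies the same formula separately to taker-buy and taker-sell volume, each against its own baseline. The labels and the 3.0 / 1.0 cutoffs in this sketch are hypothetical:

```python
from statistics import mean, stdev


def z_score(current: float, history: list[float]) -> float:
    return (current - mean(history)) / stdev(history)


def directional_label(buy_z: float, sell_z: float,
                      strong: float = 3.0, quiet: float = 1.0) -> str:
    """Describe which side of the tape (if any) is driving an anomaly."""
    if buy_z >= strong and sell_z >= strong:
        return "two-sided surge"
    if buy_z >= strong and sell_z < quiet:
        return "buy-side skew"
    if sell_z >= strong and buy_z < quiet:
        return "sell-side skew"
    return "no clear directional anomaly"


# Hypothetical taker volumes: each side is scored against its own history
buy_z = z_score(160.0, [100.0, 120.0, 80.0, 110.0, 90.0])
sell_z = z_score(102.0, [100.0, 120.0, 80.0, 110.0, 90.0])
print(f"buy Z = {buy_z:.1f}, sell Z = {sell_z:.1f} -> {directional_label(buy_z, sell_z)}")
```

Calling `directional_label(3.5, 0.4)` with the figures from the example above also returns "buy-side skew".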

The Importance of Zero-Lag Rolling Baselines

Z-scores are only as reliable as the baseline they are measured against: the mean ($\mu$) and the standard deviation ($\sigma$).

Static baselines (like a fixed daily average) quickly become outdated as intraday volatility shifts. To generate institutional-grade signals, quantitative systems use continuous rolling statistical windows.

This means the mean and standard deviation update tick-by-tick as new data arrives, which provides two major advantages:

- Adaptive Normalization: The baseline adjusts continuously as market volatility regimes change, fully reflecting a new regime within about one window length. If you want to know the engineering challenges behind computing these statistics without server lag, read our breakdown on why perfect math broke our real-time scanner.

- Zero-Lag Detection: Anomalies are flagged the exact second they occur, rather than waiting for an hourly candle to close.
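One way to update the mean and standard deviation in constant time per tick is a sliding-window version of Welford's algorithm. The article does not say which method its engine uses, so treat this as an illustrative sketch; the class name and the score-then-update convention are assumptions:

```python
import math
from collections import deque
from typing import Optional


class RollingZScore:
    """Fixed-length rolling mean/std with O(1) updates (sliding-window Welford)."""

    def __init__(self, window: int) -> None:
        if window < 2:
            raise ValueError("window must be at least 2")
        self.window = window
        self.values: deque[float] = deque()
        self.mean = 0.0
        self.m2 = 0.0  # sum of squared deviations from the current mean

    def score(self, x: float) -> Optional[float]:
        """Z-score of x against the current baseline, or None if undefined."""
        n = len(self.values)
        if n < 2 or self.m2 <= 0.0:
            return None
        sigma = math.sqrt(self.m2 / (n - 1))  # sample standard deviation
        return (x - self.mean) / sigma

    def update(self, x: float) -> None:
        """Add x to the window, evicting the oldest value once the window is full."""
        if len(self.values) < self.window:
            # Warm-up phase: standard Welford insertion
            self.values.append(x)
            delta = x - self.mean
            self.mean += delta / len(self.values)
            self.m2 += delta * (x - self.mean)
        else:
            # Full window: replace the oldest value without rescanning the window
            old = self.values.popleft()
            self.values.append(x)
            old_mean = self.mean
            self.mean += (x - old) / self.window
            self.m2 += (x - old) * ((x - self.mean) + (old - old_mean))
            self.m2 = max(self.m2, 0.0)  # guard against tiny negative rounding error


# Per tick: score against the existing baseline first, then fold the tick in
rz = RollingZScore(window=5)
for volume in [100.0, 120.0, 80.0, 110.0, 90.0, 160.0]:
    z = rz.score(volume)
    rz.update(volume)
    print(volume, None if z is None else round(z, 2))
```

Scoring before updating keeps the current tick out of its own baseline. The clamp on `m2` only protects against floating-point drift; for very long-running streams, periodically recomputing the statistics from `values` is a common safeguard.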

Modern quantitative scanners compute these rolling baselines across multiple timeframes (e.g., 5M, 15M, and 60M) to detect both short-lived liquidity vacuums and macro-structural shifts.
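A multi-timeframe scan can be sketched by feeding the same 1-minute volume stream into several rolling sums (5, 15, and 60 minutes) and scoring each sum against its own history of past sums. The class name, the 120-sample baseline length, and the use of overlapping sums are assumptions for illustration; overlapping sums are strongly autocorrelated, so a production system may prefer a different baseline construction:

```python
from collections import deque
from statistics import mean, stdev


class MultiTimeframeVolumeZ:
    """Score rolling 5/15/60-minute volume sums against their own recent history."""

    def __init__(self, timeframes=(5, 15, 60), baseline_len=120):
        # Last k one-minute volumes for each timeframe k
        self.minutes = {k: deque(maxlen=k) for k in timeframes}
        # Recent k-minute sums that serve as each timeframe's baseline
        self.history = {k: deque(maxlen=baseline_len) for k in timeframes}

    def update(self, minute_volume: float) -> dict[int, float]:
        """Ingest one 1-minute volume and return Z-scores for every ready timeframe."""
        scores = {}
        for k, window in self.minutes.items():
            window.append(minute_volume)
            if len(window) < k:
                continue  # not enough minutes yet for a full k-minute sum
            current = sum(window)
            past = self.history[k]
            if len(past) >= 2:
                sigma = stdev(past)
                if sigma > 0:
                    scores[k] = (current - mean(past)) / sigma
            past.append(current)  # score first, then extend the baseline
        return scores


# Hypothetical stream: a repeating 7-minute volume pattern, then one burst minute
scanner = MultiTimeframeVolumeZ()
for i in range(200):
    scanner.update(100.0 + 5.0 * (i % 7))
scores = scanner.update(1000.0)
print({k: round(z, 1) for k, z in scores.items()})
```

A single burst minute lifts all three rolling sums, but its weight is largest in the 5-minute sum, which is why short windows react to brief vacuums while longer windows are better suited to sustained shifts.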

If you want the exact production implementation (including how volume, trade count, and trade size are computed side by side across 5m/15m/60m), read How to Detect Crypto Volume Anomalies in Real Time (Binance USDT + Coinbase USD).

Automate Your Statistical Anomaly Detection

Statistical anomaly detection is not a trading strategy on its own, but it is the foundational framework for discovering the exact moments where market behavior deviates from the norm. Those deviations are the starting point for structural analysis.

However, calculating rolling standard deviations across hundreds of assets by hand is impractical.

AnomIQ was built to automate this exact math. Our real-time engine ingests raw tick data and applies continuous Z-score normalization across volume, trade count, and notional flow. You can easily translate these metrics into automated alerts by using our pre-built scanners or custom multi-timeframe filters.